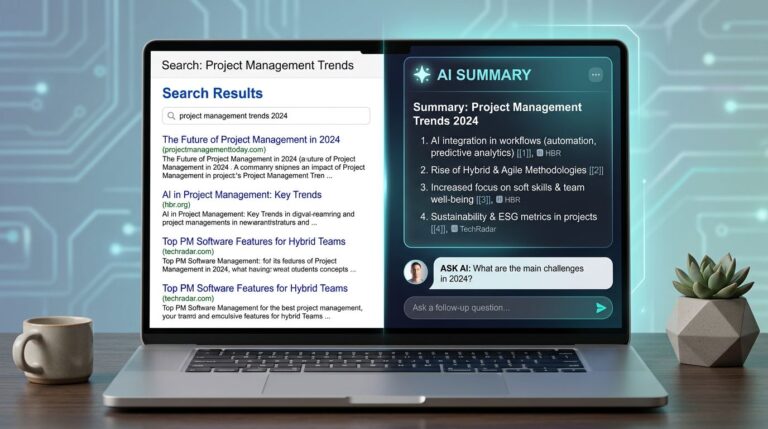

When someone asks an AI tool, “What’s your parental leave policy?” your careers page doesn’t get a second chance. Large Language Models now answer that search intent directly, through AI Overviews, Featured Snippets, and chat responses. The answer reads like a note posted on the office door: short, confident, and often final.

That’s why AI answer visibility metrics matter for HR. You’re not only competing for clicks anymore. You’re competing to be the source of the wording that candidates and employees repeat to each other.

This guide breaks down practical AEO KPIs for HR teams, plus prompts, scoring, dashboard ideas, and governance that keep answers accurate and safe.

Why HR needs AI answer visibility metrics (not just traffic)

Traditional HR analytics often starts with pageviews and ends with “people seem to find it.” AI changes that. An answer engine can summarize your PTO rules in a zero-click result, without sending a visitor to your site, and a slightly wrong summary can travel fast inside Slack threads and interview prep chats.

In March 2026, the trend is clear: HR leaders need reporting that connects AI visibility to outcomes like applications, qualified candidates, and fewer tickets to HR Ops. Recruitment teams are already adjusting job content for AI discovery using Generative Engine Optimization and Answer Engine Optimization, as outlined in guides like Joveo’s overview of GEO and AEO for recruitment. Meanwhile, marketing teams track whether the brand gets mentioned and cited, not only visited.

A helpful way to think about it is a front desk. Your site is the office, but AI answers act like the receptionist. If the receptionist gives the wrong directions, it doesn’t matter how nice the office is.

Two shifts matter most:

- From ranking to referencing: You want AI systems to quote, cite, or clearly attribute your policy source. Schema markup and an answer-first content structure can help with that. For a primer on how citations work in Large Language Models, see how to get cited by AI search.

- From content to compliance: HR answers include regulated claims (benefits, pay ranges, leave rights). You need a measurement loop that spots drift before it becomes a candidate complaint or an employee relations issue.

Treat AI answers like published HR comms, because the audience reads them that way.

Once you accept that, AEO measurement becomes less abstract. It turns into a weekly routine: test prompts, score answers, fix sources, re-test.

The AEO KPI table for HR policies, benefits, and recruiting pages

Use the table below as a starting set of AEO KPIs drawn from Answer Engine Analytics. It’s designed for HR policies, benefits pages, DEI statements, and recruiting content.

| KPI name | Definition | How to calculate/score | Data sources/tools | Reporting cadence | Common pitfalls |
|---|---|---|---|---|---|
| Visibility Score | Composite measure of how prominently HR policies and benefits appear in AI answers | Weighted average (presence 40%, citations 30%, position 30%), normalized 0-100 | Answer Engine Analytics platforms, prompt test logs | Weekly | Failing to track brand mentions in low-visibility answers |
| Average Position | Mean ranking of your HR content in AI response listings or carousels for relevant queries | Sum of positions divided by number of appearances | AI SERP trackers, manual prompt simulations | Weekly | Skewed by variable AI Traffic volumes across models |
| AI referral traffic | Visits to HR pages originating from AI search surfaces or answer experiences | Count or percentage of traffic tagged as AI sources | Web analytics (GA4), ATS referrals, server logs | Monthly | Hidden referrers in AI Traffic; use assisted conversion tracking |
| Entity Authority Score | AI-evaluated authority of your organization as the source for HR policy topics | Aggregated score from entity recognition models | Entity SEO tools, Answer Engine Analytics APIs | Biweekly | Low scores despite strong brand mentions; add schema markup |
| Answer Capture Rate | Percentage of target HR queries where your content powers the AI response | (# prompts captured) / (total tested prompts) | Prompt engineering tools, brand monitoring | Weekly | Biased testing; overlook conversational brand mentions |
| LLM Impressions | Estimated exposures of your HR content to large language models during query processing | Proxy impressions from tracking or volume estimates | LLM observability platforms, search console proxies | Monthly | Inflated estimates without tying to actual AI Traffic |
| Machine-validated authority | ML-confirmed credibility of HR content for policy facts and recruiting details | Score from automated validators (0-1 scale) | Custom ML models, third-party authority checkers | Monthly | Models missing HR nuances; cross-check with AI Traffic quality |
| Semantic density score | Concentration of key HR entities, terms, and concepts for optimal AI parsing | NLP-derived score per page (0-10) | Semantic analysis tools, content audits | Biweekly | Prioritizing density over user readability |
| Vector index presence | Frequency your HR pages register in AI vector databases and indexes | % of tested indexes showing presence | Vector database APIs, retrieval testing tools | Monthly | Presence without ranking power; monitor via AI Traffic |
| Chunk retrieval frequency | How often specific HR content chunks are pulled into AI answers | Retrieval count or rate from observable logs | RAG system logs, proxy metrics like query volume | Weekly | Frequent retrievals lacking context; validate with AI Traffic trends |

For broader context on AI visibility measurement, it can help to compare approaches described in AI visibility metrics worth tracking and adapt them to HR’s risk profile.

The practical takeaway: pick a small set that balances visibility (presence, citations) with truth (accuracy, alignment) and impact (tickets, applicants, time-to-hire).
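
To make the table's formulas concrete, here is a minimal Python sketch of three of the KPIs: Answer Capture Rate, Average Position, and Visibility Score. The `PromptResult` record, the rule that a cited answer counts as "captured," and the use of `1 / position` as the position component are all assumptions made for illustration; the table only specifies the 40/30/30 weights and the 0-100 range.

```python
from dataclasses import dataclass
from typing import List, Optional

# Weights taken from the KPI table: presence 40%, citations 30%, position 30%.
WEIGHTS = {"presence": 0.4, "citations": 0.3, "position": 0.3}


@dataclass
class PromptResult:
    """One tested prompt and what the AI answer showed (hypothetical log shape)."""
    prompt: str
    present: bool                   # your content or brand appeared in the answer
    cited: bool                     # the answer cited the correct HR page
    position: Optional[int] = None  # 1-based rank among sources, None if absent


def answer_capture_rate(results: List[PromptResult]) -> float:
    """(# prompts captured) / (total tested prompts); 'captured' is assumed to mean cited."""
    if not results:
        return 0.0
    return sum(r.cited for r in results) / len(results)


def average_position(results: List[PromptResult]) -> Optional[float]:
    """Sum of positions divided by number of appearances."""
    positions = [r.position for r in results if r.position is not None]
    return sum(positions) / len(positions) if positions else None


def visibility_score(results: List[PromptResult]) -> float:
    """Weighted average of presence, citation, and position components, scaled to 0-100."""
    if not results:
        return 0.0
    n = len(results)
    presence = sum(r.present for r in results) / n
    citations = sum(r.cited for r in results) / n
    # Assumption: rank 1 scores 1.0, rank 2 scores 0.5, and absent answers score 0.
    position = sum(1 / r.position for r in results if r.position) / n
    raw = (WEIGHTS["presence"] * presence
           + WEIGHTS["citations"] * citations
           + WEIGHTS["position"] * position)
    return round(100 * raw, 2)


results = [
    PromptResult("Is parental leave paid?", present=True, cited=True, position=1),
    PromptResult("Can I carry over PTO?", present=True, cited=False, position=2),
    PromptResult("Do part-time employees get benefits?", present=False, cited=False),
    PromptResult("How many interview rounds?", present=True, cited=True, position=3),
]
print(answer_capture_rate(results))  # 0.5
print(average_position(results))     # 2.0
print(visibility_score(results))     # 58.75
```

Whatever position formula you pick, keep it fixed from week to week so that the trend line reflects changes in the answers and not changes in the scoring.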

Prompts, scoring rubric, dashboards, and governance for HR AEO

Example prompt sets HR teams should test every week

Keep prompts plain, because employees type plainly. Mix “candidate voice” and “employee voice,” and include messy wording.

- Benefits: “Does CompanyName offer health insurance on day one?” “What’s the HSA contribution?” “Do part-time employees get benefits?”

- PTO: “How much vacation do new hires get?” “Can I carry over PTO?” “What happens to PTO when I leave?”

- Parental leave: “Is parental leave paid?” “How long is maternity leave?” “Does it cover adoption?”

- Salary bands: “What’s the pay range for Senior Analyst?” “Do you share salary ranges in offers?” “How do promotions affect pay bands?”

- Interview process: “How many interview rounds?” “Do you do take-home assignments?” “How long until a decision?”

- DEI statements: “What does CompanyName do for accessibility?” “Do you publish workforce demographics?” “How do you prevent hiring bias?”

Run the same set across the AI tools your audience uses most, then store screenshots or exports so you can track AEO KPIs over time and maintain an audit trail.
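
One way to keep the weekly run repeatable is to generate the test plan from a fixed prompt list. The sketch below assumes a hypothetical record layout and placeholder tool names (`assistant_a`, `assistant_b`); the prompts are a subset of the ones listed above. It only builds the plan, and leaves the answer and evidence fields empty for whoever runs the test.

```python
from datetime import date
from itertools import product

# A subset of the prompt sets above; {company} is filled in at run time.
PROMPT_SETS = {
    "benefits": [
        "Does {company} offer health insurance on day one?",
        "Do part-time employees get benefits?",
    ],
    "pto": [
        "How much vacation do new hires get?",
        "Can I carry over PTO?",
    ],
    "parental_leave": [
        "Is parental leave paid?",
        "Does it cover adoption?",
    ],
}


def build_test_plan(company, tools, run_date):
    """Return one record per (prompt, tool) pair, ready to be filled in and archived."""
    plan = []
    for topic, templates in PROMPT_SETS.items():
        for template, tool in product(templates, tools):
            plan.append({
                "run_date": run_date.isoformat(),
                "tool": tool,
                "topic": topic,
                "prompt": template.format(company=company),
                "answer_text": None,    # paste or export the AI answer here
                "evidence_path": None,  # path to the stored screenshot or export
            })
    return plan


plan = build_test_plan("CompanyName", ["assistant_a", "assistant_b"], date(2026, 3, 2))
print(len(plan))          # 12 records: 6 prompts x 2 tools
print(plan[0]["prompt"])  # Does CompanyName offer health insurance on day one?
```

Because every record carries the run date, tool, and evidence path, the same file can double as the audit trail.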

Answer quality scoring rubric (simple enough to use)

Use a 1 to 5 scale, and require a minimum pass score before you consider a topic “safe.”

| Criterion | 1 (Poor) | 3 (OK) | 5 (Excellent) |
|---|---|---|---|
| Accuracy | Wrong facts | Mostly right, minor errors | Fully correct to current policy |
| Completeness | Missing key details | Covers basics, misses exceptions | Covers basics plus key edge cases |
| Policy alignment | Conflicts with policy terms | Uses similar language | Matches policy wording and rules |
| Brand mention (when relevant) | No brand context | Mentions brand once | Clear brand attribution without fluff |
| AI citations | No source | Vague source reference | Clear citation to the correct page |

If an answer reads clearly and confidently but scores low on accuracy, treat it as a priority bug, not a “content opportunity.”
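
The rubric can be applied mechanically once reviewers have entered their 1 to 5 scores. The sketch below is one possible reading of it, not a prescribed method: the pass thresholds (`MIN_PASS_MEAN`, `ACCURACY_FLOOR`) and the status labels are assumptions, since the rubric only asks for "a minimum pass score."

```python
CRITERIA = (
    "accuracy",
    "completeness",
    "policy_alignment",
    "brand_mention",
    "ai_citations",
)

MIN_PASS_MEAN = 4.0  # assumed threshold for calling a topic "safe"
ACCURACY_FLOOR = 4   # assumed: accuracy below this is always a priority bug


def evaluate(scores):
    """Score one AI answer against the rubric and return its mean and status."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for criterion in CRITERIA:
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion} must be between 1 and 5")

    mean = sum(scores[c] for c in CRITERIA) / len(CRITERIA)
    if scores["accuracy"] < ACCURACY_FLOOR:
        # A well-written but wrong answer is a bug, however high the other scores are.
        status = "priority_bug"
    elif mean < MIN_PASS_MEAN:
        status = "needs_work"
    else:
        status = "safe"
    return {"mean": round(mean, 2), "status": status}


confident_but_wrong = {
    "accuracy": 2,
    "completeness": 5,
    "policy_alignment": 5,
    "brand_mention": 5,
    "ai_citations": 5,
}
print(evaluate(confident_but_wrong))  # {'mean': 4.4, 'status': 'priority_bug'}
```

Checking accuracy before the mean is deliberate: an average of 4.4 would otherwise pass, even though the answer states the wrong policy.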

Building a dashboard HR leaders will trust

A useful AEO dashboard looks like a cockpit, not a scrapbook. Keep it tight:

- Visibility panel: Presence rate, citation rate, attribution rate, source analysis, brand mention share (weekly trend).

- Quality panel: Accuracy and alignment scores by topic (PTO, benefits, pay, interviews).

- Impact panel: Ticket deflection, application starts, qualified candidate rate, conversion velocity, time-to-hire trend.

Then connect dots with short annotations. For example: “PTO carryover page updated Feb 12, accuracy score rose from 2.8 to 4.6, PTO tickets dropped 18% in three weeks.” Your numbers will vary, but the story format should stay consistent.
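
If the dashboard is fed from a spreadsheet or script, the annotation can be generated in the same story format each time. This is a small sketch; the function names and the ticket counts (50 before, 41 after) are hypothetical, chosen only so that the arithmetic reproduces the 18% drop in the example above.

```python
def percent_change(before, after):
    """Relative change from before to after, in percent."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100


def annotate(page, updated_on, score_before, score_after,
             tickets_before, tickets_after, weeks):
    """Build a one-line dashboard annotation in a consistent story format."""
    score_verb = "rose" if score_after >= score_before else "fell"
    change = round(percent_change(tickets_before, tickets_after))
    ticket_verb = "dropped" if change < 0 else "rose"
    return (
        f"{page} updated {updated_on}, accuracy score {score_verb} "
        f"from {score_before} to {score_after}, "
        f"tickets {ticket_verb} {abs(change)}% in {weeks} weeks."
    )


note = annotate("PTO carryover page", "Feb 12", 2.8, 4.6,
                tickets_before=50, tickets_after=41, weeks=3)
print(note)
```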

For extra framing on how AEO differs from classic SEO reporting, skim a practical guide like Answer Engine Optimization (AEO): AI visibility, then translate the concepts into HR-safe measures.

Privacy, security, legal, and governance (where HR can’t wing it)

HR AEO work touches sensitive areas fast. Put guardrails in place:

- Privacy: Never test prompts with real employee identifiers. Also, avoid pasting internal cases into public tools.

- Regulated claims: Treat salary bands, leave rights, and benefits eligibility as legal-adjacent. Route changes through the right reviewers.

- Bias and fairness: If AI supports hiring decisions, document audits and keep human oversight, because many jurisdictions expect it.

- Technical oversight: Use schema markup so AI tools can parse policy pages more reliably, and remember that AI answers form a parallel surface that mirrors your site content, so errors on the page can resurface there.
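
As an illustration of the schema markup mentioned above, the sketch below builds schema.org `FAQPage` JSON-LD for a policy question. Choosing `FAQPage` is an assumption, since the text does not name a specific schema type, and the answer text is a placeholder that should be replaced with reviewed policy wording.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


markup = faq_jsonld([
    # Placeholder answer: paste the approved policy wording here.
    ("Is parental leave paid?", "PLACEHOLDER: approved policy wording goes here."),
])
print(json.dumps(markup, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the single source-of-truth page for that policy, after the same review that covers the visible page text.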

Governance doesn’t need a committee of 20. It needs ownership:

- Assign a policy owner per topic (benefits, PTO, leave, pay transparency).

- Set a review cycle (quarterly for stable policies, faster during open enrollment).

- Keep a single source-of-truth page per policy, then point other pages to it.
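
The ownership rules above can be checked with a short script. The sketch below is illustrative only: the `PolicyPage` fields, owner names, and URLs are hypothetical, and 90 days is an assumed stand-in for "quarterly."

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PolicyPage:
    """One source-of-truth page with its owner and review cycle (hypothetical shape)."""
    topic: str
    owner: str
    source_url: str
    last_reviewed: date
    review_every_days: int = 90  # assumed length of a quarterly cycle


def overdue(pages, today):
    """Return the topics whose last review is older than their review cycle."""
    return [
        page.topic
        for page in pages
        if today - page.last_reviewed > timedelta(days=page.review_every_days)
    ]


pages = [
    PolicyPage("pto", "owner_a", "https://example.com/policies/pto", date(2025, 11, 1)),
    PolicyPage("parental_leave", "owner_b", "https://example.com/policies/leave",
               date(2026, 1, 10)),
    # Shorter cycle, for example during open enrollment.
    PolicyPage("benefits", "owner_c", "https://example.com/policies/benefits",
               date(2026, 2, 20), review_every_days=30),
]
print(overdue(pages, today=date(2026, 3, 15)))  # ['pto']
```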

Conclusion

AI answers have become the hallway posters of HR, seen quickly and repeated often. When you track AEO KPIs that balance AI visibility, accuracy, and outcomes, you can prove value without guessing. Start small: define your prompt set, score answers weekly, and fix the policy sources that drive the biggest risk. Then tie improvements to fewer tickets, stronger candidate quality, faster hiring, and higher AI referral traffic. The teams that win in 2026 will treat AI answer quality like any other HR promise: clear, current, and accountable.